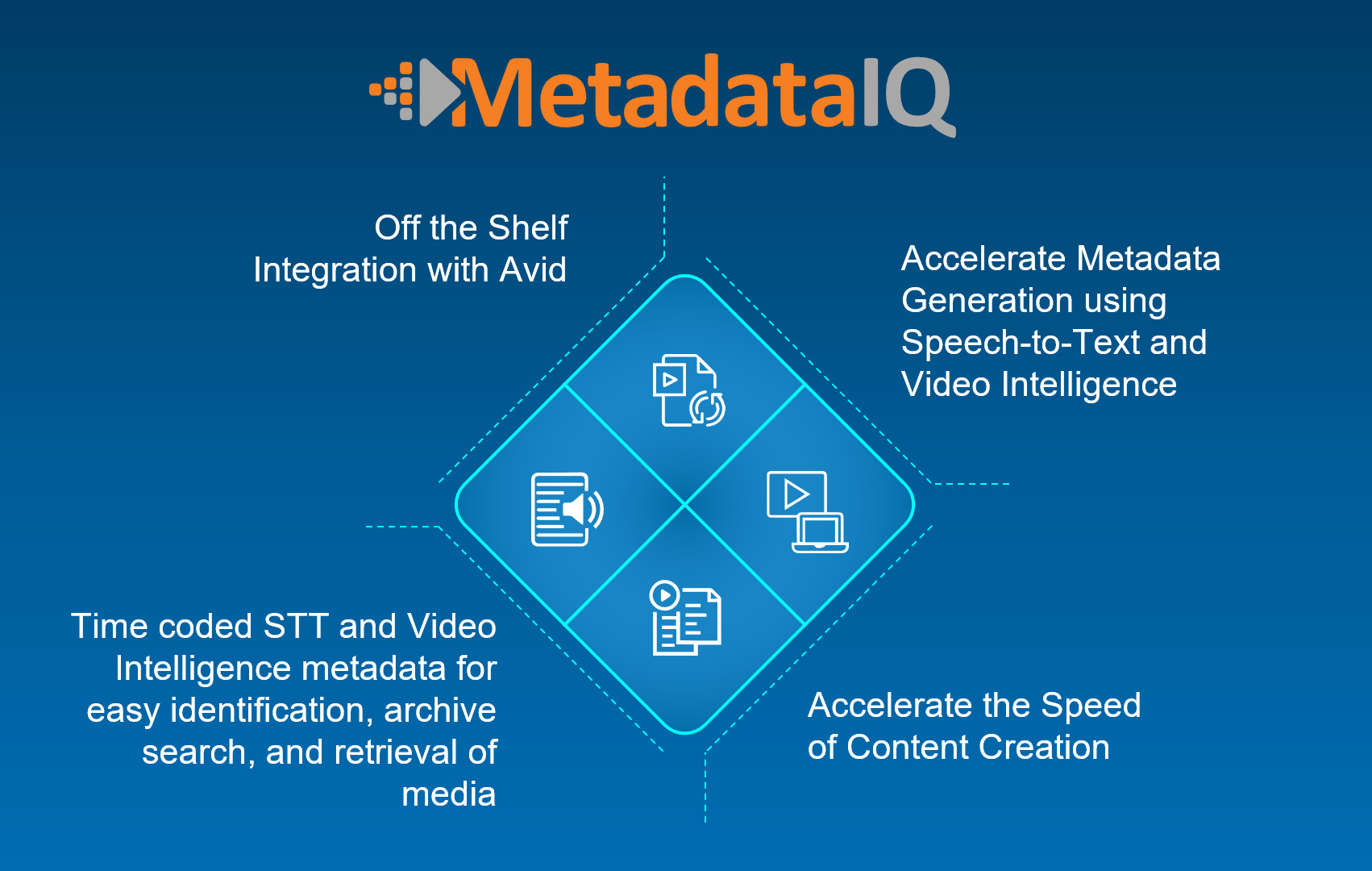

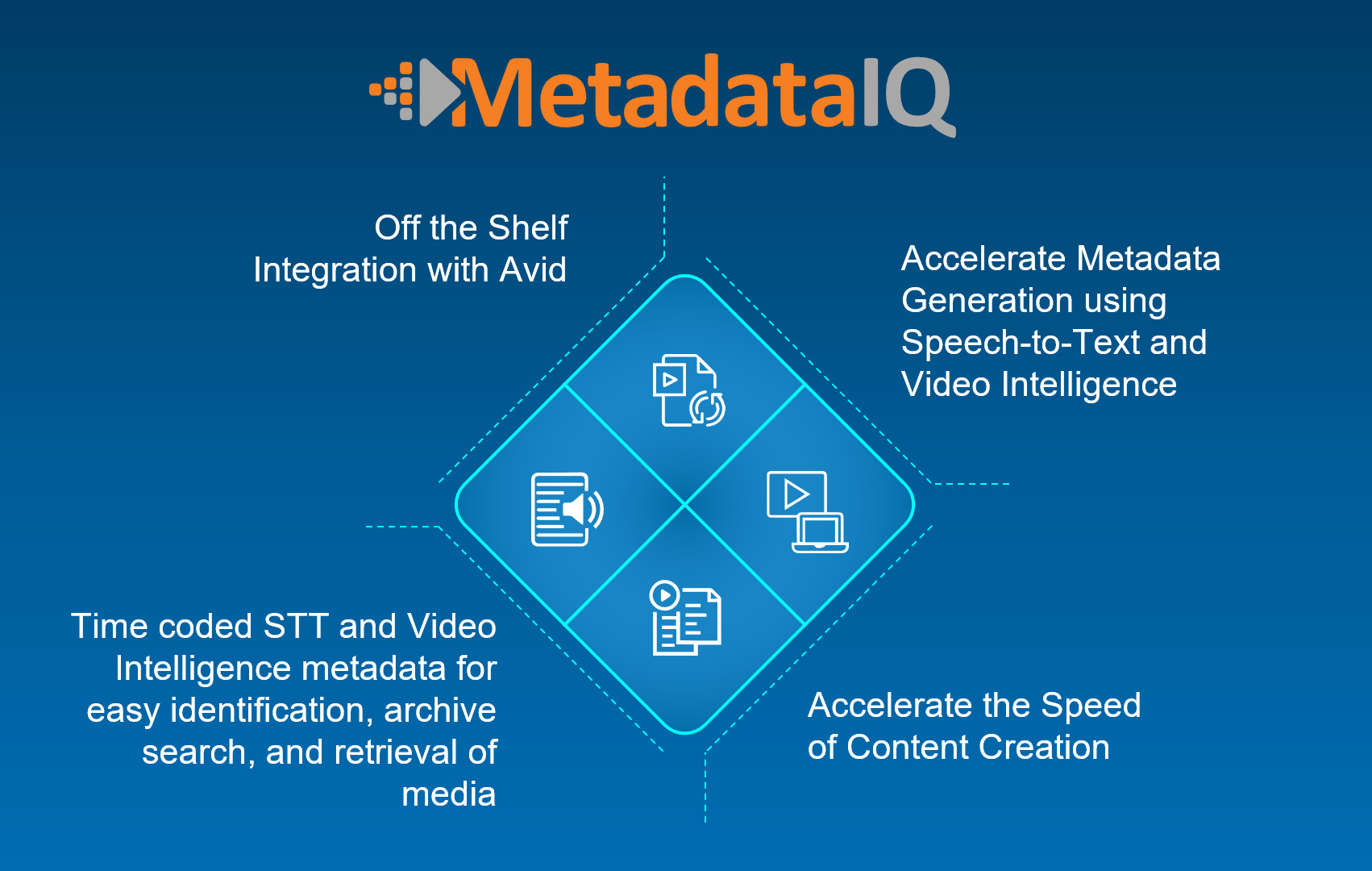

Digital Nirvana, a provider of leading-edge media monitoring and metadata generation services, today announced that MetadataIQ, its SaaS-based tool that automatically generates speech-to-text and video intelligence metadata, now supports Avid CTMS APIs. As a result, video editors and producers can now use MetadataIQ to extract media directly from Avid Media Composer or Avid MediaCentral Cloud UX (MCCUX) rather than having to connect with Avid Interplay first. This capability will help broadcast networks, postproduction houses, sports organizations, houses of worship, and other Avid users that do not have Interplay in their environments to benefit from MetadataIQ.

Previously, only Avid Interplay users were able to employ MetadataIQ to extract media and insert speech-to-text and video intelligence metadata as markers within an Avid timeline. Now, all Avid Media Composer/MCCUX users will be able to do this. They will also be able to:

• Ingest different types of metadata, such as speech-to-text, facial recognition, OCR, logos, and objects, each with customizable marker durations and color codes so the metadata type is easy to identify.

• Submit files without having to create low-res proxies or manually import metadata files into Avid Media Composer/MCCUX.

• Automatically submit media files to Digital Nirvana’s transcription and caption service to receive the highest-quality, human-curated output.

• Submit data from MCCUX into Digital Nirvana’s Trance product to generate transcripts, captions, and translations in-house and publish files in all industry-supported formats.

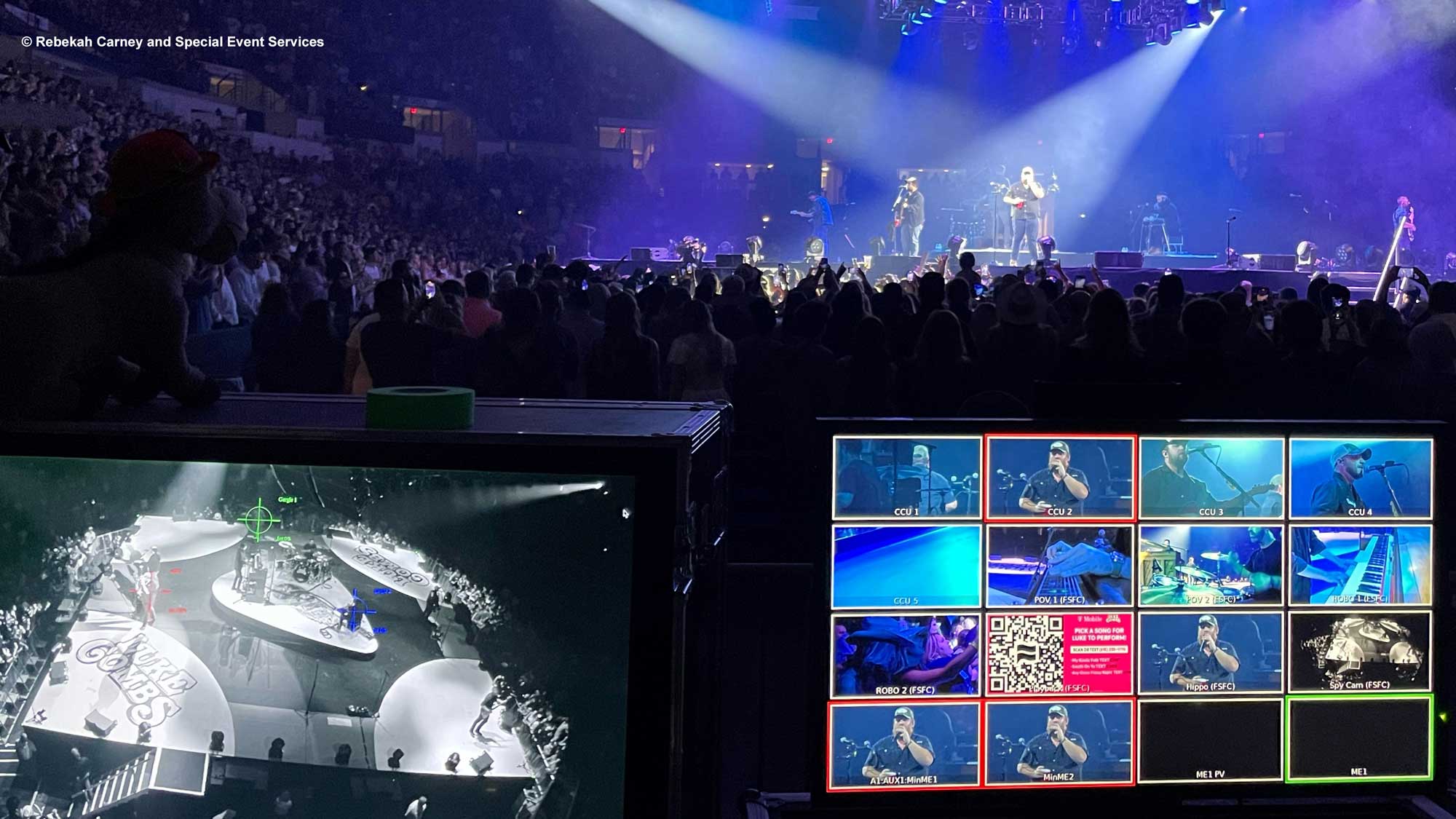

When Luke Combs finally hit the road again for his What You See is What You Get tour in 2021, he was happy to be back in the spotlight after the collapse of his 2020 tour due to the pandemic. But this time, the spotlight is a Follow-Me 3D Six system supplied by Special Event Services (SES) of Mocksville, NC, and Nashville, TN.

Since its founding in 2001 by pastor Chris Hodges and a core group of 34 people, the growth of Church of the Highlands has been nothing short of astounding. In that time, the multi-site megachurch has grown to two dozen campus locations, mostly in central Alabama and centered around Birmingham. In the process, it has become the second-largest church in the United States, with an average of over 43,000 attendees each week.

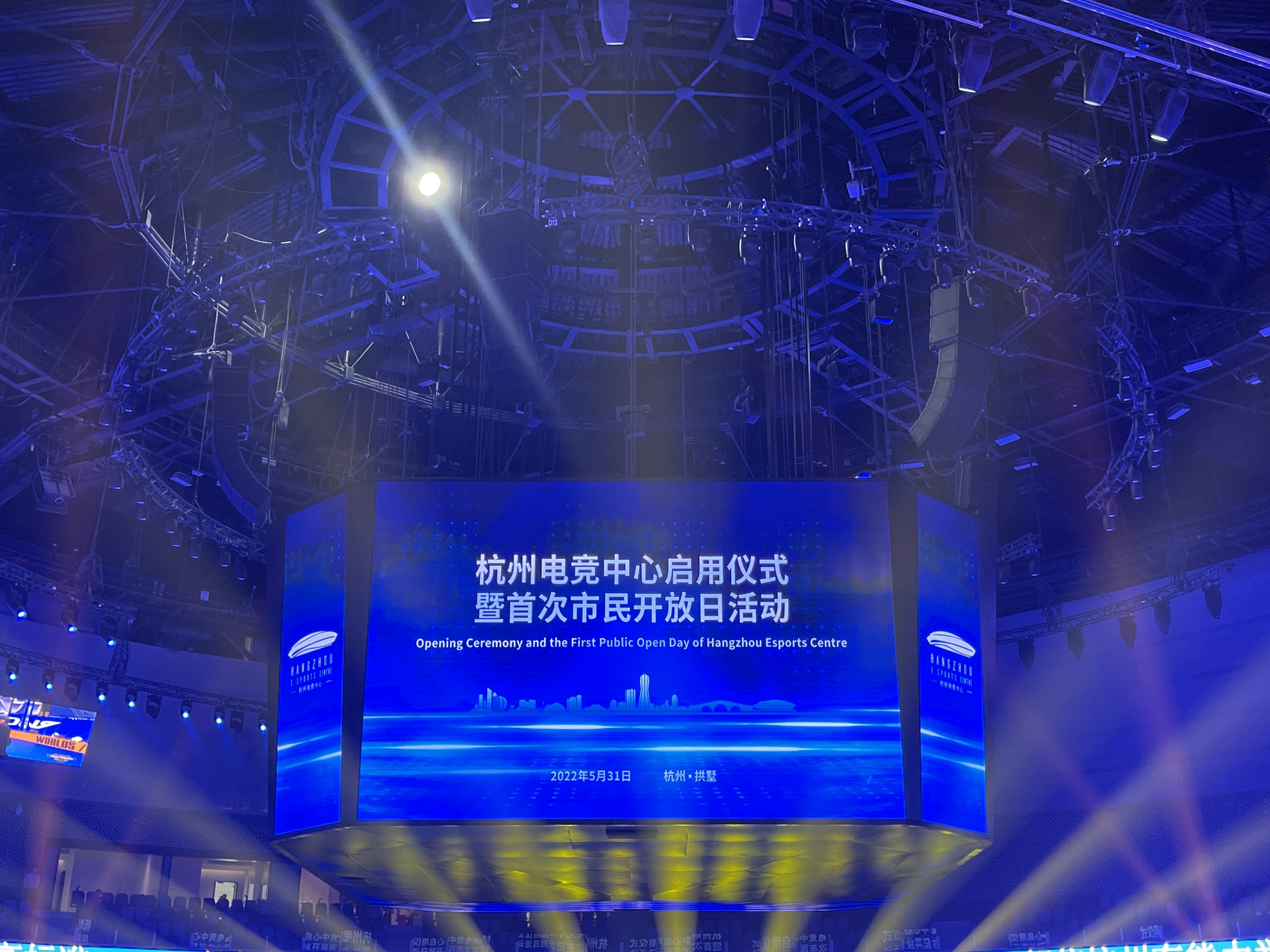

Hangzhou Esports Center, the first professional esports venue in China, recently opened its doors to esports professionals. The starship-shaped venue is the perfect fit for the futuristic and science-fiction feel of esports, but because the large event space has a long reverberation time, it was a challenge to find an audio system that could meet the extremely high requirements of esports competitions.

IHSE USA today announced it will exhibit at NAB Show New York, booth 920, Oct. 19-20 at the Javits Center. As the leading solutions provider for high-performance KVM systems, the company will focus its product presentations on market segments ranging from high-frame-rate (HFR) eSports extenders to low-cost KVM desktop solutions that support up to four computers with single, dual, triple, quad, or even five displays for each computer connected to the switch. Also on deck for the show are the company’s new 4K-over-IP extenders offered through the kvm-tec product line.
